Big Data | Lab 1: Basic Big Data System Experiments | Getting Familiar with Common HDFS Operations

Contents

- 📚 Objectives

- 📚 Platform

- 📚 Tasks

- ⭐️HDFSApi

- ⭐️HDFSApi2

- ⭐️HDFSApi3

- ⭐️HDFSApi4

- ⭐️HDFSApi5

- ⭐️HDFSApi6

- ⭐️HDFSApi7

- ⭐️HDFSApi8

- ⭐️HDFSApi9

- ⭐️HDFSApi10

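📚 Objectives

1)Understand the role HDFS plays in the Hadoop architecture.

2)Become proficient with the common shell commands for HDFS operations.

3)Become familiar with the common Java API for HDFS operations.

📚 Platform

1)Operating system: Linux;

2)Hadoop version: 3.2.2;

3)JDK version: 1.8;

4)Java IDE: Eclipse.

📚 Tasks

Implement each of the following features in code, and complete the same task with the shell commands that Hadoop provides.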

⭐️HDFSApi

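1)Upload an arbitrary text file to HDFS. If the specified file already exists in HDFS, let the user choose whether to append to the end of the existing file or to overwrite it;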

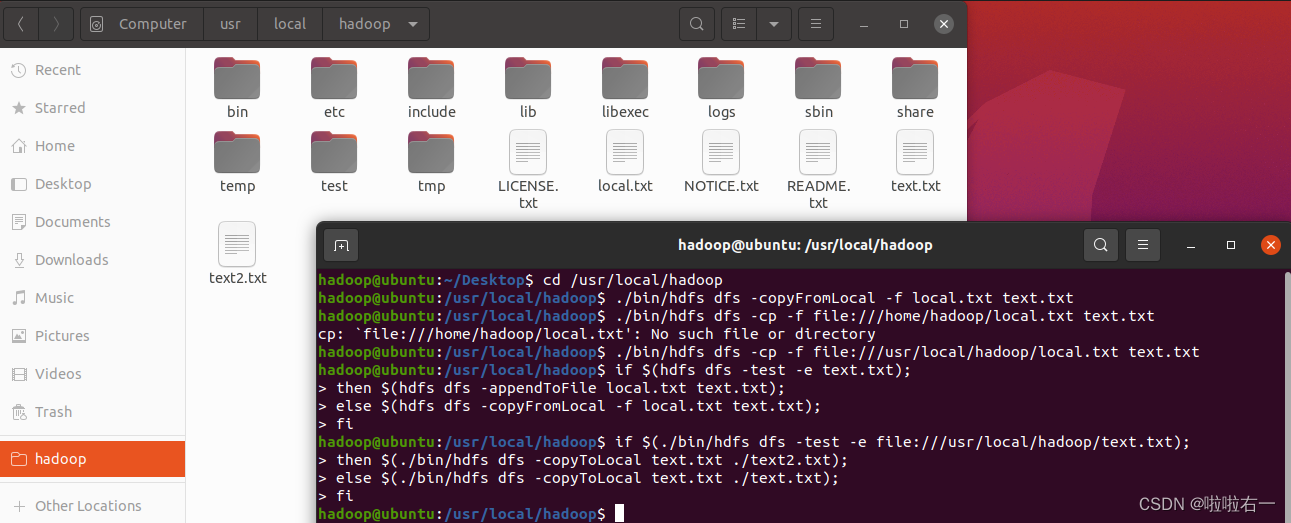

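Shell commands

To check whether the file exists, run:

cd /usr/local/hadoop

./bin/hdfs dfs -test -e text.txt

# The command above prints nothing; run the next command to see its result

echo $?

I started this lab right after rebooting the virtual machine and got an error at first. A quick search showed that Hadoop simply had not been started.

echo $? prints the exit status of the previous command. For -test -e, 0 means the path exists; any other value means it does not exist or the command failed. Here the status is non-zero because text.txt (a file, not a folder) had not been created yet. I created a text.txt by hand and dragged it into /usr/local/hadoop. Note that the relative path text.txt in the command is resolved inside HDFS, under the user's HDFS home directory, not in the local /usr/local/hadoop directory.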
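The exit-status convention described above can also be observed from Java. The following is a minimal sketch, not part of the lab solution: the class name ExitStatusDemo and the helper exitStatus are made up for illustration, and `sh -c "exit N"` stands in for a real command so the sketch runs without Hadoop.

```java
import java.io.IOException;

// Hypothetical demo class: shows what `echo $?` reports, using plain JDK calls.
class ExitStatusDemo {
    /** Runs a command and returns its exit status (the value `echo $?` would print). */
    static int exitStatus(String... command) throws IOException, InterruptedException {
        Process p = new ProcessBuilder(command).inheritIO().start();
        return p.waitFor();
    }

    public static void main(String[] args) throws Exception {
        // Stand-ins for a command that succeeds and a command that fails.
        System.out.println(exitStatus("sh", "-c", "exit 0")); // prints 0
        System.out.println(exitStatus("sh", "-c", "exit 1")); // prints 1
        // With Hadoop running, the same helper could wrap the real check
        // (assumed install path, taken from the commands in this post):
        // exitStatus("/usr/local/hadoop/bin/hdfs", "dfs", "-test", "-e", "text.txt");
    }
}
```

A status of 0 from the commented-out call would correspond to "text.txt exists in HDFS", exactly as with echo $? in the shell.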

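The user can choose to append to the end of the existing file or to overwrite it:

cd /usr/local/hadoop

./bin/hdfs dfs -appendToFile local.txt text.txt # append to the end of the existing file

#touch local.txt

./bin/hdfs dfs -copyFromLocal -f local.txt text.txt # overwrite the existing file, first form

./bin/hdfs dfs -cp -f file:///usr/local/hadoop/local.txt text.txt # overwrite the existing file, second form

If local.txt is missing, running touch local.txt first creates an empty local.txt automatically.

Alternatively, the check and the upload can be combined in a single command:

if hdfs dfs -test -e text.txt; then
  hdfs dfs -appendToFile local.txt text.txt
else
  hdfs dfs -copyFromLocal -f local.txt text.txt
fi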

Java implementation

package HDFSApi;

import java.util.Scanner;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;

public class HDFSApi {
    /* Check whether a path exists */
    public static boolean test(Configuration conf, String path) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        return fs.exists(new Path(path));
    }

    /* Copy a local file to the given path, overwriting the target if it already exists */
    public static void copyFromLocalFile(Configuration conf, String localFilePath, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path localPath = new Path(localFilePath);
        Path remotePath = new Path(remoteFilePath);
        /* First argument: whether to delete the source file; second argument: whether to overwrite */
        fs.copyFromLocalFile(false, true, localPath, remotePath);
        fs.close();
    }

    /* Append the content of a local file to an HDFS file */
    public static void appendToFile(Configuration conf, String localFilePath, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        /* Input stream over the local file */
        FileInputStream in = new FileInputStream(localFilePath);
        /* Output stream whose content is appended to the end of the HDFS file */
        FSDataOutputStream out = fs.append(remotePath);
        /* Copy the content */
        byte[] data = new byte[1024];
        int read = -1;
        while ((read = in.read(data)) > 0) {
            out.write(data, 0, read);
        }
        out.close();
        in.close();
        fs.close();
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        // fs.defaultFS replaces the deprecated key fs.default.name
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String localFilePath = "/usr/local/hadoop/local.txt";
        String remoteFilePath = "/usr/local/hadoop/text.txt";
        /* Read the user's choice from standard input: "append" or "overwrite" */
        Scanner in = new Scanner(System.in);
        String a = in.nextLine();
        boolean append = a.contentEquals("append");
        boolean overwrite = a.contentEquals("overwrite");
        try {
            /* Check whether the file exists */
            boolean fileExists = false;
            if (HDFSApi.test(conf, remoteFilePath)) {
                fileExists = true;
                System.out.println(remoteFilePath + " already exists.");
            } else {
                System.out.println(remoteFilePath + " does not exist.");
            }
            if (!fileExists) { // the file does not exist: upload it
                HDFSApi.copyFromLocalFile(conf, localFilePath, remoteFilePath);
                System.out.println(localFilePath + " uploaded to " + remoteFilePath);
            } else if (overwrite) { // the user chose to overwrite
                HDFSApi.copyFromLocalFile(conf, localFilePath, remoteFilePath);
                System.out.println(localFilePath + " overwrote " + remoteFilePath);
            } else if (append) { // the user chose to append
                HDFSApi.appendToFile(conf, localFilePath, remoteFilePath);
                System.out.println(localFilePath + " appended to " + remoteFilePath);
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

⭐️HDFSApi2

2)Download a specified file from HDFS. If a local file with the same name as the file being downloaded already exists, rename the downloaded file automatically;

Shell commands

if ./bin/hdfs dfs -test -e file:///usr/local/hadoop/text.txt; then
  ./bin/hdfs dfs -copyToLocal text.txt ./text2.txt
else
  ./bin/hdfs dfs -copyToLocal text.txt ./text.txt
fi

Java implementation

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;

public class HDFSApi2 {
    /* Download a file to the local file system; if the local path already exists, rename the download automatically */
    public static void copyToLocal(Configuration conf, String remoteFilePath, String localFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        File f = new File(localFilePath);
        if (f.exists()) {
            // The file name is taken: rename automatically by appending _0, _1, ...
            System.out.println(localFilePath + " already exists.");
            int i = 0;
            while (true) {
                f = new File(localFilePath + "_" + i);
                if (!f.exists()) {
                    localFilePath = localFilePath + "_" + i;
                    break;
                }
                i++; // this suffix is taken too, try the next one
            }
            System.out.println("Renaming to: " + localFilePath);
        }
        // Download the file to the local file system
        Path localPath = new Path(localFilePath);
        fs.copyToLocalFile(remotePath, localPath);
        fs.close();
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String localFilePath = "/usr/local/hadoop/local.txt";
        String remoteFilePath = "/usr/local/hadoop/text.txt";
        try {
            HDFSApi2.copyToLocal(conf, remoteFilePath, localFilePath);
            System.out.println("Download finished");
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
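The automatic renaming step (append _0, _1, ... until a free name is found) does not depend on HDFS, so it can be tried out on its own. Below is a small sketch under that assumption: LocalRenameDemo and firstFreePath are illustrative names, and Path here is java.nio.file.Path, not Hadoop's Path class.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical demo class: the rename-on-conflict rule, using local files only.
class LocalRenameDemo {
    /** Returns wanted if it is free, otherwise the first of wanted_0, wanted_1, ... that does not exist. */
    static Path firstFreePath(Path wanted) {
        if (!Files.exists(wanted)) {
            return wanted;
        }
        for (int i = 0; ; i++) { // the counter must advance, or the loop never ends
            Path candidate = Paths.get(wanted.toString() + "_" + i);
            if (!Files.exists(candidate)) {
                return candidate;
            }
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("rename-demo");
        Path target = dir.resolve("text.txt");
        System.out.println(firstFreePath(target).getFileName()); // prints text.txt
        Files.createFile(target);
        Files.createFile(dir.resolve("text.txt_0"));
        System.out.println(firstFreePath(target).getFileName()); // prints text.txt_1
    }
}
```

The second call skips text.txt_0 because that name is already taken, which is the case where a counter that never advances would loop forever.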

⭐️HDFSApi3

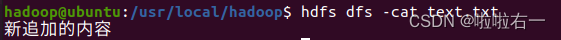

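3)Print the content of a specified HDFS file to the terminal;

Shell commands

hdfs dfs -cat text.txt

I ran the shell command first. It finished without an error but printed nothing, even though the txt file did have content. After I ran the Java implementation once and then went back to the shell command, it produced output. I have not figured out why.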

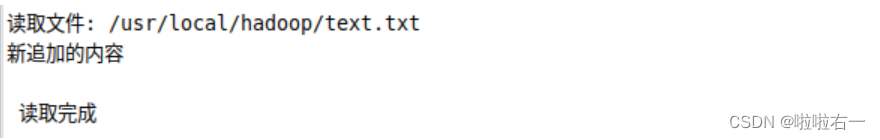

Java implementation

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;

public class HDFSApi3 {
    /* Read the file and print its content line by line */
    public static void cat(Configuration conf, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        FSDataInputStream in = fs.open(remotePath);
        BufferedReader d = new BufferedReader(new InputStreamReader(in));
        String line = null;
        while ((line = d.readLine()) != null) {
            System.out.println(line);
        }
        d.close();
        in.close();
        fs.close();
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String remoteFilePath = "/usr/local/hadoop/text.txt"; // HDFS path
        try {
            System.out.println("Reading file: " + remoteFilePath);
            HDFSApi3.cat(conf, remoteFilePath);
            System.out.println("\nDone reading");
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

⭐️HDFSApi4

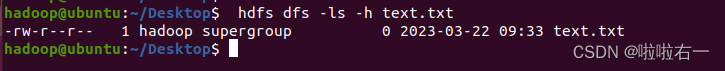

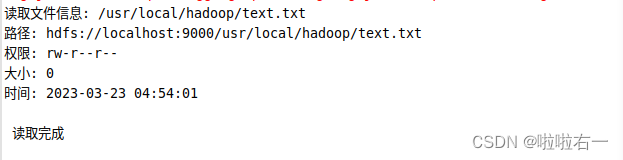

4)Show the read/write permissions, size, creation time, path, and similar information of a specified HDFS file;

Shell commands

hdfs dfs -ls -h text.txt

Java implementation

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;
import java.text.SimpleDateFormat;

public class HDFSApi4 {
    /* Show the information of the specified file */
    public static void ls(Configuration conf, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        FileStatus[] fileStatuses = fs.listStatus(remotePath);
        for (FileStatus s : fileStatuses) {
            System.out.println("Path: " + s.getPath().toString());
            System.out.println("Permissions: " + s.getPermission().toString());
            System.out.println("Size: " + s.getLen());
            /* getModificationTime returns a timestamp in milliseconds; convert it to a date string */
            long timeStamp = s.getModificationTime();
            SimpleDateFormat format = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
            String date = format.format(timeStamp);
            System.out.println("Modification time: " + date);
        }
        fs.close();
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String remoteFilePath = "/usr/local/hadoop/text.txt"; // HDFS path
        try {
            System.out.println("Reading file information: " + remoteFilePath);
            HDFSApi4.ls(conf, remoteFilePath);
            System.out.println("\nDone reading");
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
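The time reported for a file is a timestamp in milliseconds since the Unix epoch, which has to be converted before it is readable. As a small illustration, here is a sketch of that conversion with java.time instead of SimpleDateFormat. TimestampDemo and its format helper are hypothetical names, and the time zone is passed in explicitly so the result does not depend on the machine's settings.

```java
import java.time.Instant;
import java.time.ZoneId;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

// Hypothetical demo class: converts epoch milliseconds to a readable date string.
class TimestampDemo {
    private static final DateTimeFormatter FORMAT = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    /** Formats milliseconds since 1970-01-01T00:00:00Z in the given time zone. */
    static String format(long epochMillis, ZoneId zone) {
        return FORMAT.format(Instant.ofEpochMilli(epochMillis).atZone(zone));
    }

    public static void main(String[] args) {
        System.out.println(format(0L, ZoneOffset.UTC));          // prints 1970-01-01 00:00:00
        System.out.println(format(86_400_000L, ZoneOffset.UTC)); // prints 1970-01-02 00:00:00
        // Local time of "now", comparable to what SimpleDateFormat prints by default.
        System.out.println(format(System.currentTimeMillis(), ZoneId.systemDefault()));
    }
}
```

SimpleDateFormat with no explicit zone uses the JVM's default time zone, so the same timestamp can print differently on machines configured for different zones.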

⭐️HDFSApi5

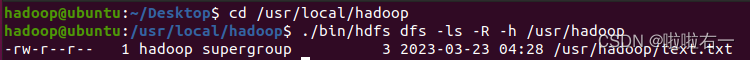

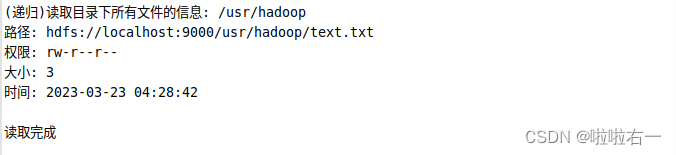

5)Given a directory in HDFS, recursively print the read/write permissions, size, creation time, path, and similar information of every file under it;

Shell commands

cd /usr/local/hadoop

./bin/hdfs dfs -ls -R -h /usr/hadoop

Java implementation

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;
import java.text.SimpleDateFormat;

public class HDFSApi5 {
    /* Show the information of all files under the specified directory (recursively) */
    public static void lsDir(Configuration conf, String remoteDir) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path dirPath = new Path(remoteDir);
        /* Recursively get all files under the directory */
        RemoteIterator<LocatedFileStatus> remoteIterator = fs.listFiles(dirPath, true);
        /* Print the information of each file */
        while (remoteIterator.hasNext()) {
            FileStatus s = remoteIterator.next();
            System.out.println("Path: " + s.getPath().toString());
            System.out.println("Permissions: " + s.getPermission().toString());
            System.out.println("Size: " + s.getLen());
            /* getModificationTime returns a timestamp in milliseconds; convert it to a date string */
            long timeStamp = s.getModificationTime();
            SimpleDateFormat format = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
            String date = format.format(timeStamp);
            System.out.println("Modification time: " + date);
            System.out.println();
        }
        fs.close();
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String remoteDir = "/usr/hadoop"; // HDFS path
        try {
            System.out.println("Reading information of all files under the directory (recursively): " + remoteDir);
            HDFSApi5.lsDir(conf, remoteDir);
            System.out.println("Done reading");
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
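For comparison, the same kind of recursive listing can be sketched on the local file system with java.nio, which is handy for trying the idea without a running cluster. This is only a local analogue, not the HDFS API: LocalLsDemo, listRecursive and describe are illustrative names, and reading POSIX permissions assumes a Linux-like file system, as on the lab platform.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.attribute.PosixFilePermissions;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical demo class: a local counterpart of a recursive file listing.
class LocalLsDemo {
    /** Returns one "permissions size modified path" line per regular file below dir, sorted by path. */
    static List<String> listRecursive(Path dir) throws IOException {
        try (Stream<Path> walk = Files.walk(dir)) {
            return walk.filter(Files::isRegularFile) // directories themselves are skipped
                    .sorted()
                    .map(LocalLsDemo::describe)
                    .collect(Collectors.toList());
        }
    }

    private static String describe(Path p) {
        try {
            String perms = PosixFilePermissions.toString(Files.getPosixFilePermissions(p));
            return perms + " " + Files.size(p) + " " + Files.getLastModifiedTime(p) + " " + p;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("ls-demo");
        Path sub = Files.createDirectories(dir.resolve("a"));
        Files.write(sub.resolve("b.txt"), "abc".getBytes(StandardCharsets.UTF_8));
        listRecursive(dir).forEach(System.out::println); // one line, for a/b.txt (3 bytes)
    }
}
```

Like the recursive file listing used for HDFS, this walks the whole tree but reports only files, so empty subdirectories do not appear in the output.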

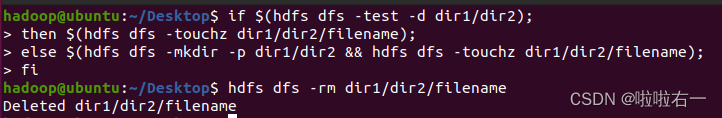

⭐️HDFSApi6

6)Given the path of a file inside HDFS, create and delete that file. If the directory containing the file does not exist, create the directory automatically;

Shell commands

if hdfs dfs -test -d dir1/dir2; then
  hdfs dfs -touchz dir1/dir2/filename
else
  hdfs dfs -mkdir -p dir1/dir2 && hdfs dfs -touchz dir1/dir2/filename
fi

hdfs dfs -rm dir1/dir2/filename # delete the file

Java implementation

Case where the path exists (the code below shows this case)

Case where the path does not exist

Case where the directory does not exist

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;

public class HDFSApi6 {
    /* Check whether a path exists */
    public static boolean test(Configuration conf, String path) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        return fs.exists(new Path(path));
    }

    /* Create a directory (including missing parent directories) */
    public static boolean mkdir(Configuration conf, String remoteDir) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path dirPath = new Path(remoteDir);
        boolean result = fs.mkdirs(dirPath);
        fs.close();
        return result;
    }

    /* Create an empty file */
    public static void touchz(Configuration conf, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        FSDataOutputStream outputStream = fs.create(remotePath);
        outputStream.close();
        fs.close();
    }

    /* Delete a file */
    public static boolean rm(Configuration conf, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        boolean result = fs.delete(remotePath, false);
        fs.close();
        return result;
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String remoteFilePath = "/usr/local/hadoop/text.txt"; // HDFS path
        // Directory for the HDFS path. Note: this value is not the parent of
        // remoteFilePath; to match the task it should be /usr/local/hadoop.
        String remoteDir = "/usr/hadoop/input";
        try {
            /* If the path exists, delete it; otherwise create it */
            if (HDFSApi6.test(conf, remoteFilePath)) {
                HDFSApi6.rm(conf, remoteFilePath); // delete
                System.out.println("Deleted path: " + remoteFilePath);
            } else {
                if (!HDFSApi6.test(conf, remoteDir)) { // create the directory if it does not exist
                    HDFSApi6.mkdir(conf, remoteDir);
                    System.out.println("Created directory: " + remoteDir);
                }
                HDFSApi6.touchz(conf, remoteFilePath);
                System.out.println("Created path: " + remoteFilePath);
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

⭐️HDFSApi7

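7)Given the path of a directory in HDFS, create and delete that directory. When creating it, create any missing parent directories automatically; when deleting it, delete the directory only if it is empty and leave it in place otherwise;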

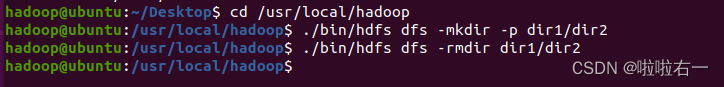

Shell commands

cd /usr/local/hadoop

./bin/hdfs dfs -mkdir -p dir1/dir2

./bin/hdfs dfs -rmdir dir1/dir2

# To force-delete a non-empty directory, use the following command

./bin/hdfs dfs -rm -R dir1/dir2

Java implementation

The directory does not exist, so it is created

The directory exists and is empty, so it is deleted

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;

public class HDFSApi7 {
    /* Check whether a path exists */
    public static boolean test(Configuration conf, String path) throws IOException {
        FileSystem fs = FileSystem.get(conf); // handle to the HDFS file system
        return fs.exists(new Path(path));
    }

    /* Check whether a directory is empty. true: empty, false: not empty */
    public static boolean isDirEmpty(Configuration conf, String remoteDir) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path dirPath = new Path(remoteDir);
        // List the direct children (files and subdirectories) as an array.
        // A recursive listFiles call would ignore empty subdirectories.
        FileStatus[] children = fs.listStatus(dirPath);
        return children.length == 0;
    }

    /* Create a directory (including missing parent directories) */
    public static boolean mkdir(Configuration conf, String remoteDir) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path dirPath = new Path(remoteDir);
        boolean result = fs.mkdirs(dirPath);
        fs.close();
        return result;
    }

    /* Delete a directory */
    public static boolean rmDir(Configuration conf, String remoteDir) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path dirPath = new Path(remoteDir);
        /* The second argument says whether to delete everything below the path recursively */
        boolean result = fs.delete(dirPath, true);
        fs.close();
        return result;
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String remoteDir = "/usr/hadoop/test/dir";
        boolean forceDelete = false;
        try {
            /* If the directory does not exist, create it; if it exists, delete it */
            if (!HDFSApi7.test(conf, remoteDir)) {
                HDFSApi7.mkdir(conf, remoteDir); // create
                System.out.println("Created directory: " + remoteDir);
            } else {
                if (HDFSApi7.isDirEmpty(conf, remoteDir) || forceDelete) { // empty, or forced deletion
                    HDFSApi7.rmDir(conf, remoteDir);
                    System.out.println("Deleted directory: " + remoteDir);
                } else { // not empty
                    System.out.println("Directory is not empty, not deleting: " + remoteDir);
                }
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
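The "is the directory empty" check deserves care, because "no files anywhere below it" and "no entries at all" are different conditions: a directory that holds only an empty subdirectory satisfies the first but not the second. The sketch below shows the difference on the local file system with java.nio, as a rough analogue of a recursive file listing versus a listing of direct children. EmptyDirDemo and its two helpers are hypothetical names.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.stream.Stream;

// Hypothetical demo class: two different notions of an "empty" directory.
class EmptyDirDemo {
    /** True only if the directory has no direct children at all (files or subdirectories). */
    static boolean hasNoEntries(Path dir) throws IOException {
        try (DirectoryStream<Path> entries = Files.newDirectoryStream(dir)) {
            return !entries.iterator().hasNext();
        }
    }

    /** True if no regular file exists anywhere below dir; empty subdirectories are ignored. */
    static boolean hasNoFilesRecursive(Path dir) throws IOException {
        try (Stream<Path> walk = Files.walk(dir)) {
            return walk.noneMatch(Files::isRegularFile);
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("empty-demo");
        Files.createDirectory(dir.resolve("sub")); // dir now holds one empty subdirectory
        System.out.println(hasNoEntries(dir));        // prints false
        System.out.println(hasNoFilesRecursive(dir)); // prints true
    }
}
```

For a "delete only when empty" rule, the first check is the safer one, since the second would allow a directory that still contains subdirectories to be removed.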

⭐️HDFSApi8

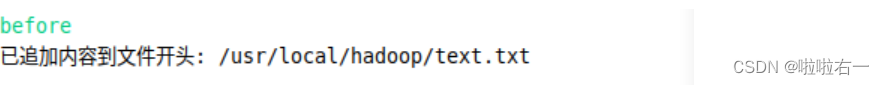

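8)Add content to a specified HDFS file, letting the user choose whether the content goes at the beginning or at the end of the existing file;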

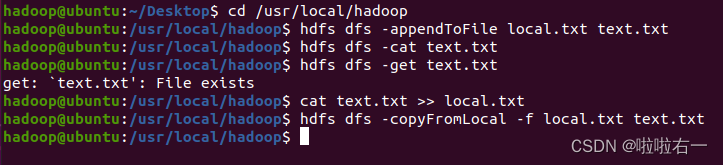

Shell commands

# Append to the end of the existing file

cd /usr/local/hadoop

hdfs dfs -appendToFile local.txt text.txt

# Add to the beginning of the existing file

hdfs dfs -get text.txt

cat text.txt >> local.txt

hdfs dfs -copyFromLocal -f local.txt text.txt

Java implementation

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;
import java.util.Scanner;

public class HDFSApi8 {
    /* Check whether a path exists */
    public static boolean test(Configuration conf, String path) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        return fs.exists(new Path(path));
    }

    /* Append a string to an HDFS file */
    public static void appendContentToFile(Configuration conf, String content, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        /* Output stream whose content is appended to the end of the file */
        FSDataOutputStream out = fs.append(remotePath);
        out.write(content.getBytes()); // convert the string to a byte array
        out.close();
        fs.close();
    }

    /* Append the content of a local file to an HDFS file */
    public static void appendToFile(Configuration conf, String localFilePath, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        /* Input stream over the local file */
        FileInputStream in = new FileInputStream(localFilePath);
        /* Output stream whose content is appended to the end of the file */
        FSDataOutputStream out = fs.append(remotePath);
        /* Copy the content */
        byte[] data = new byte[1024];
        int read = -1;
        while ((read = in.read(data)) > 0) {
            out.write(data, 0, read);
        }
        out.close();
        in.close();
        fs.close();
    }

    /* Move a file to the local file system; the source file is deleted after the move */
    public static void moveToLocalFile(Configuration conf, String remoteFilePath, String localFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        Path localPath = new Path(localFilePath);
        fs.moveToLocalFile(remotePath, localPath);
        fs.close();
    }

    /* Create an empty file */
    public static void touchz(Configuration conf, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        FSDataOutputStream outputStream = fs.create(remotePath);
        outputStream.close();
        fs.close();
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        conf.set("dfs.client.block.write.replace-datanode-on-failure.policy", "NEVER");
        String remoteFilePath = "/usr/local/hadoop/text.txt"; // HDFS file
        String content = "Newly appended content\n";
        /* Read the user's choice from standard input: "after" (end of file) or "before" (beginning of file) */
        Scanner in = new Scanner(System.in);
        String a = in.nextLine();
        boolean atStart = a.contentEquals("before");
        boolean atEnd = a.contentEquals("after");
        try {
            /* Check whether the file exists */
            if (!HDFSApi8.test(conf, remoteFilePath)) {
                System.out.println("File does not exist: " + remoteFilePath);
            } else {
                if (atEnd) { // append to the end of the file
                    HDFSApi8.appendContentToFile(conf, content, remoteFilePath);
                    System.out.println("Appended content to the end of the file: " + remoteFilePath);
                } else if (atStart) { // add to the beginning of the file
                    /* No API does this directly, so move the file to the local file system first, */
                    /* create a new HDFS file, then append the pieces in order */
                    String localTmpPath = "/usr/local/hadoop/tmp.txt";
                    // Move the file to the local file system
                    HDFSApi8.moveToLocalFile(conf, remoteFilePath, localTmpPath);
                    // Create a new, empty file
                    HDFSApi8.touchz(conf, remoteFilePath);
                    // Write the new content first
                    HDFSApi8.appendContentToFile(conf, content, remoteFilePath);
                    // Then write the original content
                    HDFSApi8.appendToFile(conf, localTmpPath, remoteFilePath);
                    System.out.println("Added content to the beginning of the file: " + remoteFilePath);
                }
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
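Since a file can only be appended to, adding content at the beginning amounts to rewriting it: write the new content first, then the old content, and replace the original. The sketch below shows that order of operations on a local file with java.nio, as a simplified stand-in for the move, create and append steps used against HDFS. PrependDemo and prepend are illustrative names, not part of any Hadoop API.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

// Hypothetical demo class: "prepend" implemented as rewrite-then-replace on a local file.
class PrependDemo {
    /** Rewrites file so that content comes first, followed by the file's previous bytes. */
    static void prepend(Path file, String content) throws IOException {
        Path tmp = Files.createTempFile(file.toAbsolutePath().getParent(), "prepend", ".tmp");
        try (OutputStream out = Files.newOutputStream(tmp)) {
            out.write(content.getBytes(StandardCharsets.UTF_8)); // new content first
            Files.copy(file, out);                               // then the old content
        }
        Files.move(tmp, file, StandardCopyOption.REPLACE_EXISTING); // swap in the rewritten file
    }

    public static void main(String[] args) throws IOException {
        Path file = Files.createTempFile("text", ".txt");
        Files.write(file, "old line\n".getBytes(StandardCharsets.UTF_8));
        prepend(file, "new line\n");
        // Prints "new line" followed by "old line", each on its own line.
        System.out.print(new String(Files.readAllBytes(file), StandardCharsets.UTF_8));
    }
}
```

Writing to a temporary file and swapping it in at the end keeps the original intact until the rewritten copy is complete, which is the same reason the HDFS version keeps the old content in a local temporary file.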

⭐️HDFSApi9

9)Delete a specified file in HDFS;

Shell commands

hdfs dfs -rm text.txt

Java implementation

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import java.io.*;

public class HDFSApi9 {
    /* Delete a file */
    public static boolean rm(Configuration conf, String remoteFilePath) throws IOException {
        FileSystem fs = FileSystem.get(conf);
        Path remotePath = new Path(remoteFilePath);
        boolean result = fs.delete(remotePath, false);
        fs.close();
        return result;
    }

    /* Entry point */
    public static void main(String[] args) {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://localhost:9000");
        String remoteFilePath = "/usr/local/hadoop/text.txt"; // HDFS file
        try {
            if (HDFSApi9.rm(conf, remoteFilePath)) {
                System.out.println("Deleted file: " + remoteFilePath);
            } else {
                System.out.println("Operation failed (the file does not exist or could not be deleted)");
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

⭐️HDFSApi10

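10)In HDFS, move a file from a source path to a destination path.

Shell commands

Task 9 had just deleted text.txt, so here I used the dir created earlier (it ended up being moved back and forth between the shell run and the Java run).

hdfs dfs -mv /usr/dir /usr/hadoop/test

Java implementation

(two cases)

package HDFSApi;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import java.util.Scanner;

public class HDFSApi10 {
    public static void main(String[] args) {
        try {
            Scanner in = new Scanner(System.in);
            System.out.println("Enter the path of the file to move");
            Path srcPath = new Path(in.next());
            System.out.println("Enter the destination path");
            Path dstPath = new Path(in.next());
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            FileSystem fs = FileSystem.get(conf);
            if (!fs.exists(srcPath)) {
                System.out.println("Operation failed (the source file does not exist)");
            } else if (fs.rename(srcPath, dstPath)) { // rename returns false when the move fails
                System.out.println("Moved " + srcPath + " to " + dstPath);
            } else {
                System.out.println("Operation failed (the move did not succeed)");
            }
            fs.close();
            in.close();
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

Re-running the Java program from task 1 (HDFSApi) uploads text.txt again, so the later programs can be debugged again.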