Deep Learning: DenseNet

1. Architecture diagram

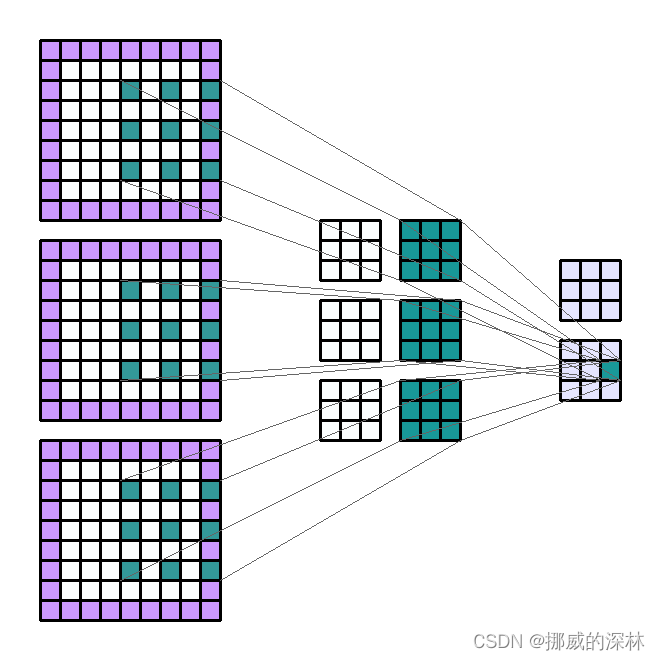

Input Shape : (3, 7, 7) — Output Shape : (2, 3, 3) — K : (3, 3) — P : (1, 1) — S : (2, 2) — D : (2, 2) — G : 1

This post is organized around the following arguments, which are listed in the PyTorch documentation for the Conv2d module:

- in_channels (int) — Number of channels in the input image

- out_channels (int) — Number of channels produced by the convolution

- kernel_size (int or tuple) — Size of the convolving kernel

- stride (int or tuple, optional) — Stride of the convolution. Default: 1

- padding (int or tuple, optional) — Zero-padding added to both sides of the input. Default: 0

- dilation (int or tuple, optional) — Spacing between kernel elements. Default: 1

- groups (int, optional) — Number of blocked connections from input channels to output channels. Default: 1

- bias (bool, optional) — If True, adds a learnable bias to the output. Default: True
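As a quick check of the shapes quoted under the first figure, the output size along each spatial dimension can be computed with the formula given in the PyTorch Conv2d documentation, floor((H + 2P - D(K - 1) - 1) / S + 1). The helper below is a small sketch in plain Python. The name `conv2d_output_shape` is made up for this example and is not part of PyTorch.

```python
def conv2d_output_shape(in_hw, out_channels, kernel_size,
                        stride=(1, 1), padding=(0, 0), dilation=(1, 1)):
    """Return (C_out, H_out, W_out) for a 2D convolution.

    Hypothetical helper: applies the output-size formula from the
    PyTorch Conv2d documentation to each spatial dimension.
    """
    out_hw = []
    for size, k, s, p, d in zip(in_hw, kernel_size, stride, padding, dilation):
        # floor((size + 2p - d(k - 1) - 1) / s + 1), using integer division
        out_hw.append((size + 2 * p - d * (k - 1) - 1) // s + 1)
    return (out_channels, *out_hw)


# Values from the figure caption: K=(3, 3), P=(1, 1), S=(2, 2), D=(2, 2)
shape = conv2d_output_shape((7, 7), out_channels=2, kernel_size=(3, 3),
                            stride=(2, 2), padding=(1, 1), dilation=(2, 2))
print(shape)  # (2, 3, 3)
```

Note that in_channels, groups, and bias do not appear in the helper: they change the number of weights, not the output shape.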

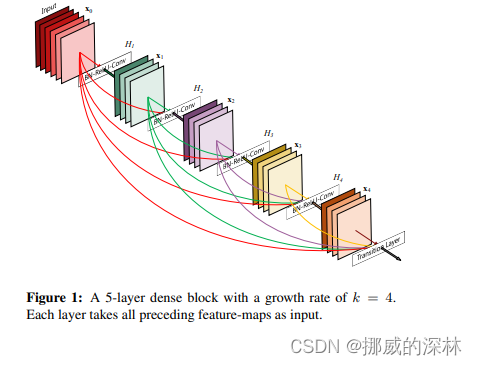

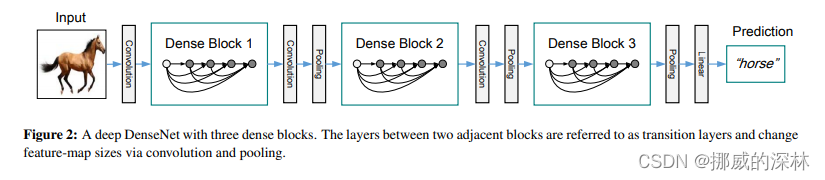

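- In Fig. 1, the last layer is called the transition layer. This layer is responsible for reducing the dimensions (as in Fig. 2, via pooling).

- Although this technique can block identity backpropagation, that only happens at some of the layers inside a DenseNet block, so it does not affect the gradient flow.

- The DenseNet code is organized into the following three parts:

#. DenseLayer: implements a single layer inside a DenseBlock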

#. DenseBlock:

#. TransitionLayer
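To make the division of labor between these three parts more concrete, here is a shape-only sketch in plain Python. It assumes the usual DenseNet behavior: each dense layer produces `growth_rate` new channels that are concatenated onto its input, and the transition layer applies a 1x1 convolution followed by 2x2 pooling with stride 2. The function names and the example numbers are illustrative and are not taken from the code in this post.

```python
def dense_block_out_channels(c_in, num_layers, growth_rate):
    """Channels after a dense block: every layer appends growth_rate
    new feature maps to the running concatenation."""
    channels = c_in
    for _ in range(num_layers):
        channels += growth_rate  # concat(layer input, new features)
    return channels


def transition_out_shape(c_out, height, width):
    """Shape after a transition layer, assuming a 1x1 convolution to
    c_out channels followed by 2x2 pooling with stride 2."""
    return (c_out, height // 2, width // 2)


c = dense_block_out_channels(c_in=32, num_layers=6, growth_rate=16)
print(c)                                     # 128
print(transition_out_shape(c // 2, 32, 32))  # (64, 16, 16)
```

Halving the channel count in the transition layer (`c // 2`) is a common choice, assumed here only for the example.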

2. Building the model

    import torch.nn as nn

    class DenseLayer(nn.Module):
        def __init__(self, c_in, bn_size, growth_rate, act_fn):
            super().__init__()
            self.net = nn.Sequential(
                nn.BatchNorm2d(c_in),
                act_fn(),
                nn.Con