刘二大人 (Liu Er Daren), "PyTorch Deep Learning Practice", Lecture 11: Convolutional Neural Networks (Advanced)

Contents

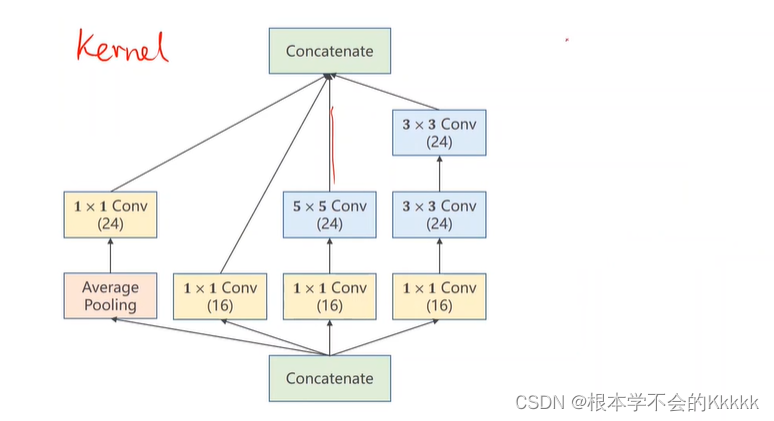

- Inception-v1 implementation

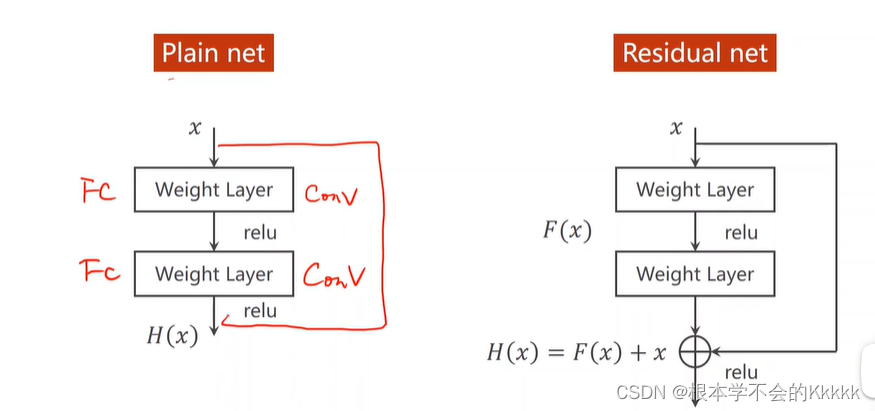

- Skip Connect implementation

Inception-v1 implementation

Inception-v1 uses many 1×1 convolution kernels. They serve two purposes:

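(1) Stacking more convolution kernels over a receptive field of the same size lets the model learn richer features. In a traditional convolutional layer, the input is convolved with kernels of only one size. An Inception module instead runs several branches in parallel (1×1, 3×3 and 5×5 convolutions plus pooling) and concatenates their outputs along the channel dimension. Inception-v1 also follows the Network in Network (NIN) idea. It first applies an ordinary convolution (for example 5×5) followed by an activation function (for example ReLU), then applies a 1×1 convolution, which is also followed by an activation function. A 1×1 convolution can be understood as a fully connected layer applied at every pixel position across all of the input feature maps.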

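(2) A 1×1 kernel can reduce the number of channels and therefore the amount of computation. When a convolutional layer receives many feature maps, convolving them directly is very expensive. If a 1×1 convolution first reduces the number of feature maps, the following convolution runs on far fewer channels and the computation drops sharply.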
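Both points can be checked numerically with the minimal sketch below. The sizes are illustrative assumptions, not taken from the code in this post: a 192-channel 28×28 input, 32 output channels, and a 1×1 reduction to 16 channels. Part (1) copies the weights of a 1×1 `Conv2d` into a `Linear` layer and applies that layer at every pixel, showing the two give the same result. Part (2) counts only multiplications and ignores biases and additions. `conv_mults` is a hypothetical helper, not a PyTorch function.

```python
import torch

# (1) A 1x1 convolution is a fully connected layer applied independently at every pixel,
#     mixing the C_in values at that position into C_out values.
torch.manual_seed(0)
conv = torch.nn.Conv2d(192, 16, kernel_size=1)
x = torch.randn(1, 192, 28, 28)

linear = torch.nn.Linear(192, 16)
with torch.no_grad():
    # Reuse the conv weights: shape (16, 192, 1, 1) -> (16, 192)
    linear.weight.copy_(conv.weight.view(16, 192))
    linear.bias.copy_(conv.bias)
    out_conv = conv(x)  # (1, 16, 28, 28)
    # Move channels last, apply the linear layer per pixel, move channels back
    out_linear = linear(x.permute(0, 2, 3, 1)).permute(0, 3, 1, 2)

print(torch.allclose(out_conv, out_linear, atol=1e-5))


# (2) Multiplications of a stride-1 convolution whose padding keeps H and W:
#     each of the H * W * C_out outputs needs k * k * C_in multiplications
def conv_mults(k, h, w, c_in, c_out):
    return k * k * h * w * c_in * c_out


direct = conv_mults(5, 28, 28, 192, 32)                      # 5x5 conv straight on 192 channels
reduced = conv_mults(1, 28, 28, 192, 16) + conv_mults(5, 28, 28, 16, 32)  # 1x1 to 16, then 5x5
print(direct, reduced)  # 120422400 12443648
```

Under these assumed sizes, putting a 1×1 reduction before the 5×5 convolution cuts the multiplication count by roughly a factor of ten.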

```python
import torch
import torch.nn.functional as F
from torch.utils.data import DataLoader
from torchvision import datasets, transforms

batch_size = 64
# Convert images to tensors, then normalize with the MNIST mean (0.1307) and std (0.3081)
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

train_dataset = datasets.MNIST(root='./dataset/mnist/', train=True, download=True, transform=transform)
train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)
test_dataset = datasets.MNIST(root='./dataset/mnist/', train=False, download=True, transform=transform)
test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)


class InceptionA(torch.nn.Module):
    def __init__(self, in_channels):
        super(InceptionA, self).__init__()
        # Branch 1: a single 1x1 convolution -> 16 channels
        self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)

        # Branch 2: 1x1 reduction, then 5x5 (padding=2 keeps H and W) -> 24 channels
        self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)

        # Branch 3: 1x1 reduction, then two 3x3 convolutions (padding=1) -> 24 channels
        self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)

        # Branch 4: 3x3 average pooling (in forward), then 1x1 -> 24 channels
        self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)

        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)

        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)

        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)

        # Concatenate along the channel dimension: 16 + 24 + 24 + 24 = 88 channels
        outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
        return torch.cat(outputs, dim=1)


class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(88, 20, kernel_size=5)  # 88 = InceptionA output channels

        self.incep1 = InceptionA(in_channels=10)
        self.incep2 = InceptionA(in_channels=20)

        self.mp = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(1408, 10)  # 1408 = 88 channels * 4 * 4

    def forward(self, x):
        in_size = x.size(0)
        x = F.relu(self.mp(self.conv1(x)))  # (N, 10, 12, 12)
        x = self.incep1(x)                  # (N, 88, 12, 12)
        x = F.relu(self.mp(self.conv2(x)))  # (N, 20, 4, 4)
        x = self.incep2(x)                  # (N, 88, 4, 4)
        x = x.view(in_size, -1)             # (N, 1408)
        x = self.fc(x)
        return x


model = Net()
```
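Two numbers in `Net` are easy to get wrong: the 88 input channels of `conv2` and the 1408 inputs of `fc`. The sketch below recomputes both with the usual output-size formula floor((size + 2·padding − kernel) / stride) + 1, assuming dilation 1 and a 28×28 MNIST input. `conv_out` is a hypothetical helper written for this check, not a PyTorch function.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Output height/width of a Conv2d or pooling layer (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


# Every InceptionA branch preserves H and W (checked on a 12x12 input)
assert conv_out(12, kernel=1) == 12              # 1x1 convolutions
assert conv_out(12, kernel=5, padding=2) == 12   # 5x5 branch
assert conv_out(12, kernel=3, padding=1) == 12   # 3x3 branch and the 3x3 average pool

# Channels after torch.cat: branch1x1 + branch5x5 + branch3x3 + branch_pool
incep_channels = 16 + 24 + 24 + 24

# Spatial size through Net for a 28x28 image
s = conv_out(28, kernel=5)             # conv1 -> 24
s = conv_out(s, kernel=2, stride=2)    # max pool -> 12
s = conv_out(s, kernel=5)              # conv2 -> 8
s = conv_out(s, kernel=2, stride=2)    # max pool -> 4
print(incep_channels, s, incep_channels * s * s)  # 88 4 1408
```

Because every branch keeps H and W, `torch.cat(..., dim=1)` only has to add up channel counts. The same arithmetic gives the 512 used by the residual network: 32 channels × 4 × 4.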

```python
# construct loss and optimizer
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


# training cycle: forward, backward, update
def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        optimizer.zero_grad()

        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:  # report the average loss every 300 batches
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0


def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            _, predicted = torch.max(outputs.data, dim=1)  # index of the largest logit
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('accuracy on test set: %d %% ' % (100 * correct / total))


if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
```

Skip Connect implementation

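The skip connection mitigates the vanishing-gradient problem. A residual block outputs H(x) = F(x) + x instead of F(x), so ∂H/∂x = ∂F/∂x + 1. Even when the gradient through the convolutions, ∂F/∂x, is close to 0, the gradient passed back to earlier layers stays close to 1. Without the skip connection it would keep shrinking toward 0 as it is multiplied through many layers.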
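The gradient effect can be seen in a toy scalar sketch. This is an illustration only, not the `ResidualBlock` below. Each "layer" is assumed to be F(h) = 0.1·h, so its local derivative is only 0.1. With a skip connection, each block computes H(h) = F(h) + h, so its local derivative becomes 0.1 + 1. `input_grad` is a hypothetical helper; autograd computes the gradient of the final output with respect to the input.

```python
import torch


def input_grad(num_blocks, residual):
    """d(output)/d(input) through a stack of toy layers F(h) = 0.1 * h."""
    x = torch.tensor(1.0, dtype=torch.float64, requires_grad=True)
    h = x
    for _ in range(num_blocks):
        f = 0.1 * h                        # a "layer" whose local gradient is only 0.1
        h = (f + h) if residual else f     # skip connection: H(h) = F(h) + h
    h.backward()
    return x.grad.item()


plain = input_grad(10, residual=False)   # 0.1 ** 10, the gradient all but vanishes
skip = input_grad(10, residual=True)     # (0.1 + 1) ** 10, the gradient survives
print(plain, skip)
```

Real layers have Jacobian matrices and ReLUs rather than a single scalar factor, so this only illustrates the "+1" term, not the full training dynamics.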

```python
import torch
import torch.nn.functional as F
from torch.utils.data import DataLoader
from torchvision import datasets, transforms

batch_size = 64
# Convert images to tensors, then normalize with the MNIST mean (0.1307) and std (0.3081)
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

train_dataset = datasets.MNIST(root='./dataset/mnist/', train=True, download=True, transform=transform)
train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)
test_dataset = datasets.MNIST(root='./dataset/mnist/', train=False, download=True, transform=transform)
test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)


class ResidualBlock(torch.nn.Module):
    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        self.channels = channels
        # Both convolutions keep the channel count and (with padding=1) H and W,
        # so y has the same shape as x and x + y is well defined
        self.conv1 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        return F.relu(x + y)  # skip connection: add the input before the final activation


class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 16, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(16, 32, kernel_size=5)

        self.rblock1 = ResidualBlock(16)
        self.rblock2 = ResidualBlock(32)

        self.mp = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(512, 10)  # 512 = 32 channels * 4 * 4

    def forward(self, x):
        in_size = x.size(0)
        x = self.mp(F.relu(self.conv1(x)))  # (N, 16, 12, 12)
        x = self.rblock1(x)
        x = self.mp(F.relu(self.conv2(x)))  # (N, 32, 4, 4)
        x = self.rblock2(x)
        x = x.view(in_size, -1)             # (N, 512)
        x = self.fc(x)
        return x


model = Net()
```
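The addition x + y only works if the block returns a tensor of exactly the input's shape. The sketch below checks that on a dummy batch and also confirms the 512 inputs expected by `fc`. It re-declares a copy of `ResidualBlock` so that it runs on its own. The dummy tensors (`torch.randn`, `torch.zeros`) stand in for real MNIST batches, and the `Sequential` stack is an assumed re-creation of `Net`'s layers up to the flatten step.

```python
import torch
import torch.nn.functional as F


class ResidualBlock(torch.nn.Module):
    # Copy of the block above: two 3x3 convs that keep the channel count and H, W
    def __init__(self, channels):
        super().__init__()
        self.conv1 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        return F.relu(x + y)


x = torch.randn(2, 16, 12, 12)   # a batch shaped like rblock1's input
out = ResidualBlock(16)(x)
print(out.shape)                 # torch.Size([2, 16, 12, 12])

# Net's layers up to the flatten step, applied to one dummy 28x28 image
features = torch.nn.Sequential(
    torch.nn.Conv2d(1, 16, kernel_size=5), torch.nn.ReLU(), torch.nn.MaxPool2d(2), ResidualBlock(16),
    torch.nn.Conv2d(16, 32, kernel_size=5), torch.nn.ReLU(), torch.nn.MaxPool2d(2), ResidualBlock(32),
)
flat = features(torch.zeros(1, 1, 28, 28)).view(1, -1)
print(flat.shape)                # torch.Size([1, 512])
```

Suppose `padding=1` were dropped from the block's convolutions. `y` would then be smaller than `x`, and the addition would raise a shape-mismatch `RuntimeError`. That is why both convolutions keep `channels -> channels` and pad by one.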

```python
# construct loss and optimizer
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


# training cycle: forward, backward, update
def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        optimizer.zero_grad()

        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:  # report the average loss every 300 batches
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0


def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for data in test_loader:
            images, labels = data
            outputs = model(images)
            _, predicted = torch.max(outputs.data, dim=1)  # index of the largest logit
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('accuracy on test set: %d %% ' % (100 * correct / total))


if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
```