A Memory Leak Caused by HDFS FileSystem

Contents

1. Problem description

2. Locating the problem and source-code analysis

1. Problem description

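An FTP program reads local files on Windows and writes them to HDFS. The program restarted roughly once every 5 days. We suspected it was being killed by an OOM, so the first step was to analyze the GC log.

Enabling the GC log: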

    /usr/java/jdk1.8.0_162/bin/java \
      -Xmx1024m -Xms512m -XX:+UseG1GC -XX:MaxGCPauseMillis=100 \
      -XX:-ResizePLAB -verbose:gc -XX:-PrintGCCause -XX:+PrintAdaptiveSizePolicy \
      -XX:+PrintGCDetails -XX:+PrintGCDateStamps -Xloggc:/hadoop/datadir/windeploy/log/ETL/DS/gc.log-`date +'%Y%m%d%H%M'` \
      -classpath $jarPath com.winnerinf.dataplat.ftp.FtpUtilDS

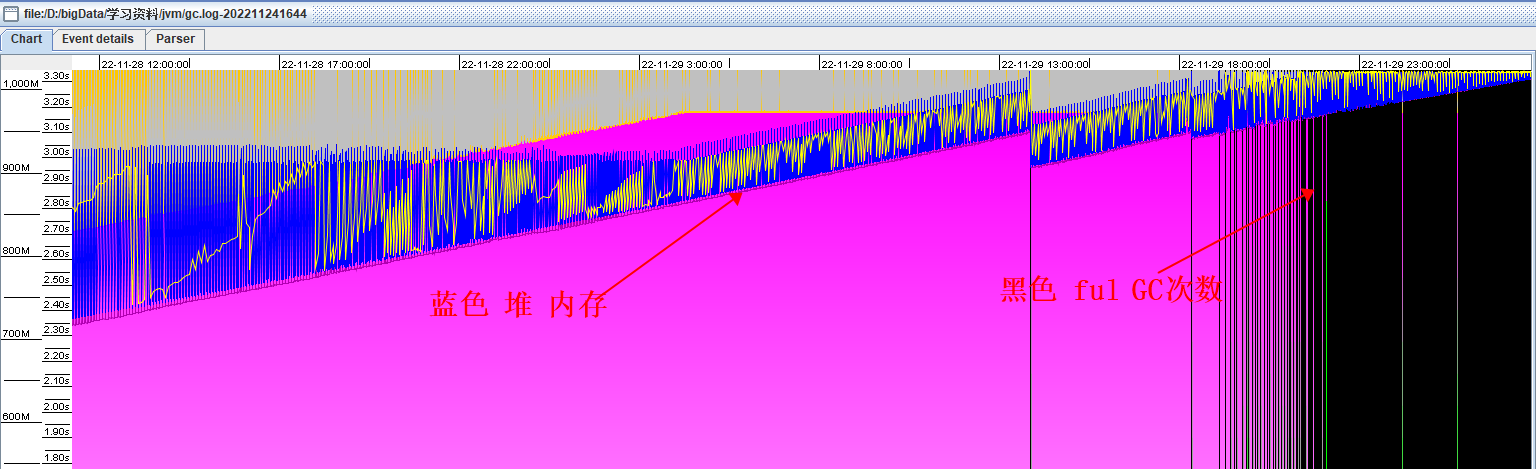

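The program is given a maximum heap of 1024 MB. The GC log shows old-generation occupancy climbing steadily from 100 MB to 1 GB, followed by frequent full GCs that reclaimed very little space. In the end the program ran out of heap and died with an OOM.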
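The same old-generation trend can also be sampled from inside the JVM rather than read off the GC log. The following is a minimal sketch using the standard `java.lang.management` API; the class name `HeapPoolSnapshot` is made up for illustration, and the pool names depend on the collector in use (with G1 the old generation is typically reported as "G1 Old Gen").

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper, not part of the original program.
class HeapPoolSnapshot {

    /** Returns the bytes currently used by each heap memory pool, keyed by pool name. */
    static Map<String, Long> usedBytesByHeapPool() {
        Map<String, Long> used = new LinkedHashMap<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            if (pool.getType() != MemoryType.HEAP) {
                continue; // skip metaspace, code cache and other non-heap pools
            }
            MemoryUsage usage = pool.getUsage();
            if (usage != null) { // getUsage() returns null for an invalid pool
                used.put(pool.getName(), usage.getUsed());
            }
        }
        return used;
    }

    public static void main(String[] args) {
        usedBytesByHeapPool().forEach((name, bytes) ->
                System.out.printf("%-25s %,d bytes%n", name, bytes));
    }
}
```

Logging this map periodically would show an old-generation pool that keeps growing between collections, which is the pattern seen in the GC log above.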

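We used the jmap command to export a heap snapshot of the process for analysis:

    jmap -dump:live,format=b,file=dump.hprof PID

This command writes a dump.hprof file to the current directory. The file is in a binary format, so it cannot be read directly; it holds a snapshot of the live objects in the JVM heap at that moment, for later analysis.

Memory Analyzer (MAT) download: Eclipse Memory Analyzer Open Source Project | The Eclipse Foundation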

https://kangll.blog.csdn.net/article/details/130222759?spm=1001.2014.3001.5502

MAT reported two problem suspects:

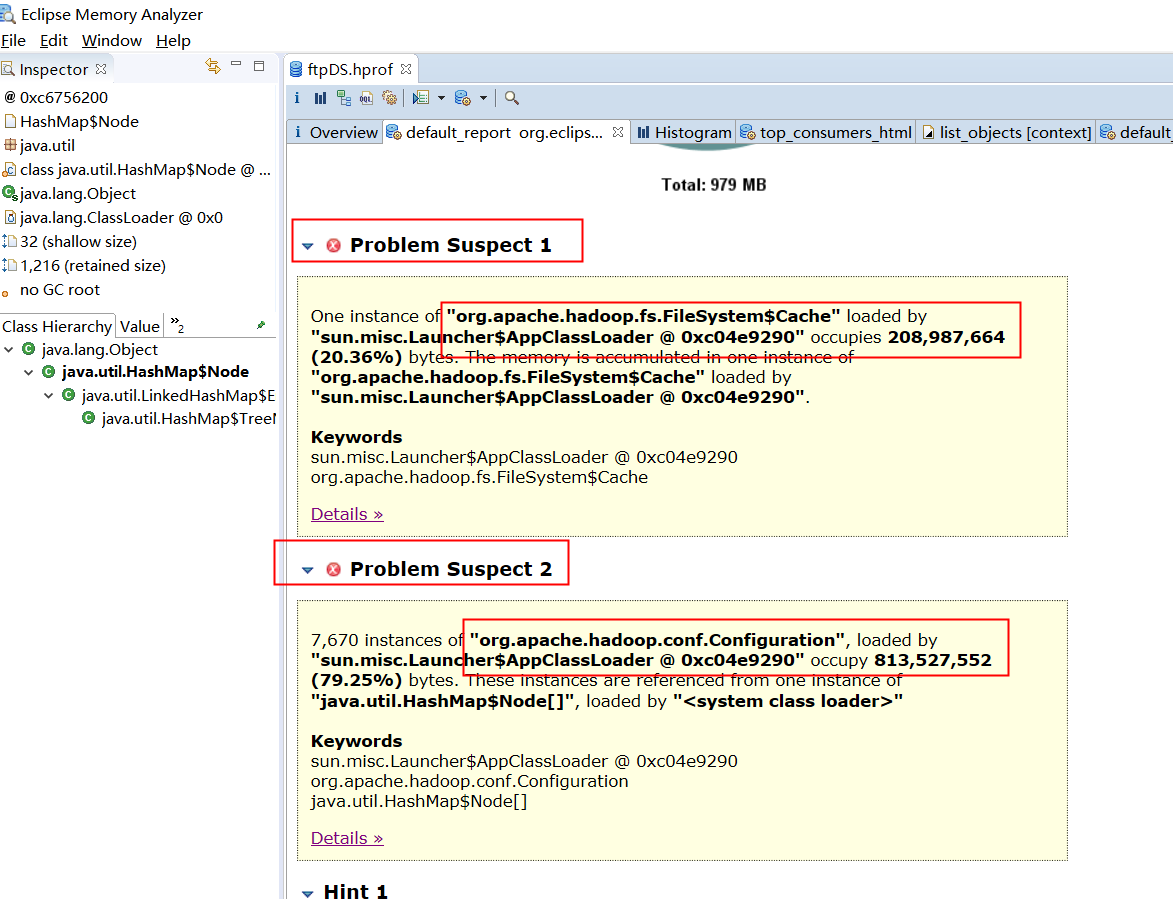


Problem 1

One instance of "org.apache.hadoop.fs.FileSystem$Cache", loaded by "sun.misc.Launcher$AppClassLoader @ 0xc04e9290", occupies 208,987,664 bytes (20.36%). The memory is accumulated in one instance of "org.apache.hadoop.fs.FileSystem$Cache", loaded by "sun.misc.Launcher$AppClassLoader @ 0xc04e9290".

Problem 2

7,670 instances of "org.apache.hadoop.conf.Configuration", loaded by "sun.misc.Launcher$AppClassLoader @ 0xc04e9290", occupy 813,527,552 bytes (79.25%). These instances are referenced from one instance of "java.util.HashMap$Node[]", loaded by "<system class loader>".
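To put the second suspect in proportion, the figures from the MAT report can be turned into a per-instance cost and a rough growth rate. This is plain arithmetic on the numbers quoted above; the 5-day run time is the approximate restart interval from the problem description, and the class name is made up for illustration.

```java
// Back-of-the-envelope arithmetic on the numbers reported by MAT.
class MatReportArithmetic {
    static final long CONFIGURATION_BYTES = 813_527_552L; // Problem 2, bytes occupied
    static final long CONFIGURATION_COUNT = 7_670L;       // Problem 2, instance count
    static final long RUN_DAYS = 5L;                      // approximate, from the restart interval

    /** Average bytes held per Configuration instance (integer division). */
    static long bytesPerConfiguration() {
        return CONFIGURATION_BYTES / CONFIGURATION_COUNT;
    }

    /** Average number of Configuration instances leaked per day. */
    static long instancesPerDay() {
        return CONFIGURATION_COUNT / RUN_DAYS;
    }

    public static void main(String[] args) {
        System.out.println(bytesPerConfiguration()); // prints 106066
        System.out.println(instancesPerDay());       // prints 1534
    }
}
```

Each leaked Configuration therefore holds on to roughly 100 KB, and about 1,500 of them accumulate per day, which is enough to fill a 1 GB heap in about 5 days.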

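2. Locating the problem and source-code analysis

The source of the problem is the org.apache.hadoop.fs.FileSystem class: after 5 days of running, the program had accumulated several thousand Configuration instances. The instances themselves are small, but every FileSystem instance holds a Configuration, which maintains a Properties object (a subclass of Hashtable) storing the Hadoop configuration entries. A Hadoop configuration with several hundred entries is normal, so each FileSystem instance corresponds to hundreds of Hashtable$Entry instances, and together they occupy a large amount of memory.

Why are there so many FileSystem instances?

This is how we obtain the FileSystem: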

    Configuration conf = new Configuration();
    conf.set("fs.hdfs.impl", "org.apache.hadoop.hdfs.DistributedFileSystem");
    conf.set("fs.file.impl", "org.apache.hadoop.fs.LocalFileSystem");
    FileSystem fileSystem = FileSystem.get(conf);

FileSystem.get uses a cache under the hood, so we did not close the fileSystem after each use; it was closed only when the httpfs service shut down.

    /**
     *
     * </ol>
     */
    @SuppressWarnings("DeprecatedIsStillUsed")
    @InterfaceAudience.Public
    @InterfaceStability.Stable
    public abstract class FileSystem extends Configured
        implements Closeable, DelegationTokenIssuer {

      public static final String FS_DEFAULT_NAME_KEY =
          CommonConfigurationKeys.FS_DEFAULT_NAME_KEY;
      public static final String DEFAULT_FS =
          CommonConfigurationKeys.FS_DEFAULT_NAME_DEFAULT;

      /**
       * Get a FileSystem for this URI's scheme and authority.
       * If the configuration has the property
       * {@code "fs.$SCHEME.impl.disable.cache"} set to true,
       * a new instance will be created, initialized with the supplied URI and
       * configuration, then returned without being cached.
       *
       * If there is a cached FS instance matching the same URI, it will be returned.
       * Otherwise: a new FS instance will be created, initialized with the
       * configuration and URI, cached and returned to the caller.
       */
      public static FileSystem get(URI uri, Configuration conf) throws IOException {
        String scheme = uri.getScheme();
        String authority = uri.getAuthority();

        if (scheme == null && authority == null) {     // use default FS
          return get(conf);
        }

        if (scheme != null && authority == null) {     // no authority
          URI defaultUri = getDefaultUri(conf);
          if (scheme.equals(defaultUri.getScheme())    // if scheme matches default
              && defaultUri.getAuthority() != null) {  // & default has authority
            return get(defaultUri, conf);              // return default
          }
        }

        // If the cache is disabled, a new FileSystem is created on every call
        String disableCacheName = String.format("fs.%s.impl.disable.cache", scheme);
        if (conf.getBoolean(disableCacheName, false)) {
          LOGGER.debug("Bypassing cache to create filesystem {}", uri);
          return createFileSystem(uri, conf);
        }

        return CACHE.get(uri, conf);
      }

      /** Caching FileSystem objects. */
      static class Cache {
        // ....

        FileSystem get(URI uri, Configuration conf) throws IOException {
          // The problem is in the key; see Key below for details
          Key key = new Key(uri, conf);
          return getInternal(uri, conf, key);
        }

        /**
         * Get the FS instance if the key maps to an instance, creating and
         * initializing the FS if it is not found.
         * If this is the first entry in the map and the JVM is not shutting down,
         * this registers a shutdown hook to close filesystems, and adds this
         * FS to the {@code toAutoClose} set if {@code "fs.automatic.close"}
         * is set in the configuration (default: true).
         */
        private FileSystem getInternal(URI uri, Configuration conf, Key key)
            throws IOException {
          FileSystem fs;
          synchronized (this) {
            fs = map.get(key);
          }
          if (fs != null) {
            return fs;
          }

          fs = createFileSystem(uri, conf);
          final long timeout = conf.getTimeDuration(SERVICE_SHUTDOWN_TIMEOUT,
              SERVICE_SHUTDOWN_TIMEOUT_DEFAULT,
              ShutdownHookManager.TIME_UNIT_DEFAULT);
          synchronized (this) { // refetch the lock again
            FileSystem oldfs = map.get(key);
            if (oldfs != null) { // a file system is created while lock is releasing
              fs.close();        // close the new file system
              return oldfs;      // return the old file system
            }

            // now insert the new file system into the map
            if (map.isEmpty()
                && !ShutdownHookManager.get().isShutdownInProgress()) {
              ShutdownHookManager.get().addShutdownHook(clientFinalizer,
                  SHUTDOWN_HOOK_PRIORITY, timeout,
                  ShutdownHookManager.TIME_UNIT_DEFAULT);
            }
            fs.key = key;
            map.put(key, fs);
            if (conf.getBoolean(FS_AUTOMATIC_CLOSE_KEY, FS_AUTOMATIC_CLOSE_DEFAULT)) {
              toAutoClose.add(key);
            }
            return fs;
          }
        }
      }
    }

Our service does not set fs.%s.impl.disable.cache, so the cache is definitely in use. The problem is therefore most likely in how the Cache key is compared.
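The lookup in getInternal follows a check, create, re-check pattern: the map is read under the lock, the expensive creation happens outside the lock, and the map is checked again before inserting. The following is a simplified, JDK-only sketch of that pattern; `RecheckingCache` and its `factory` parameter are illustrative names, not Hadoop classes, and the shutdown-hook handling is left out.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

// Simplified model of the lookup pattern; not the Hadoop implementation.
class RecheckingCache<K, V> {
    private final Map<K, V> map = new HashMap<>();
    private final Function<K, V> factory;

    RecheckingCache(Function<K, V> factory) {
        this.factory = factory;
    }

    V get(K key) {
        V cached;
        synchronized (this) {
            cached = map.get(key);   // first check, under the lock
        }
        if (cached != null) {
            return cached;
        }
        V fresh = factory.apply(key); // potentially slow, so done outside the lock
        synchronized (this) {
            V old = map.get(key);     // re-check: another thread may have won the race
            if (old != null) {
                return old;           // FileSystem closes the losing instance at this point
            }
            map.put(key, fresh);
            return fresh;
        }
    }

    synchronized int size() {
        return map.size();
    }

    public static void main(String[] args) {
        RecheckingCache<String, Object> cache = new RecheckingCache<>(k -> new Object());
        Object first = cache.get("hdfs://hdp103:8020");
        Object second = cache.get("hdfs://hdp103:8020");
        System.out.println(first == second); // equal keys return the same cached instance
        System.out.println(cache.size());
    }
}
```

The pattern only helps when two lookups for the same target produce equal keys. With keys that never compare equal, every call takes the creation path and the map grows without bound.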

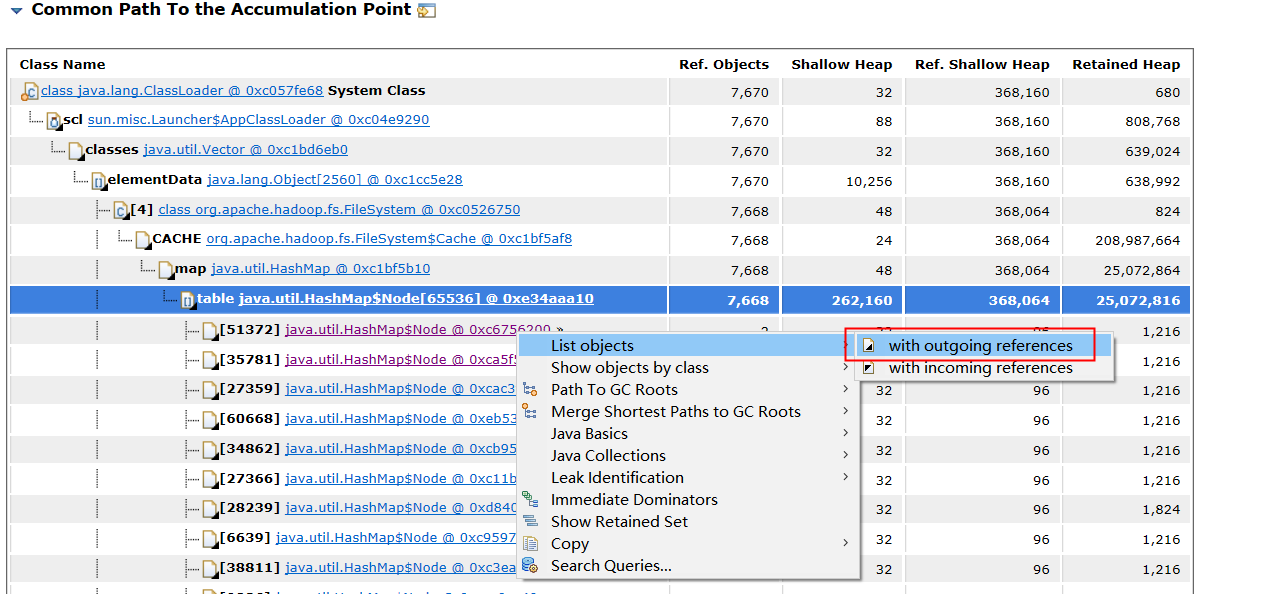

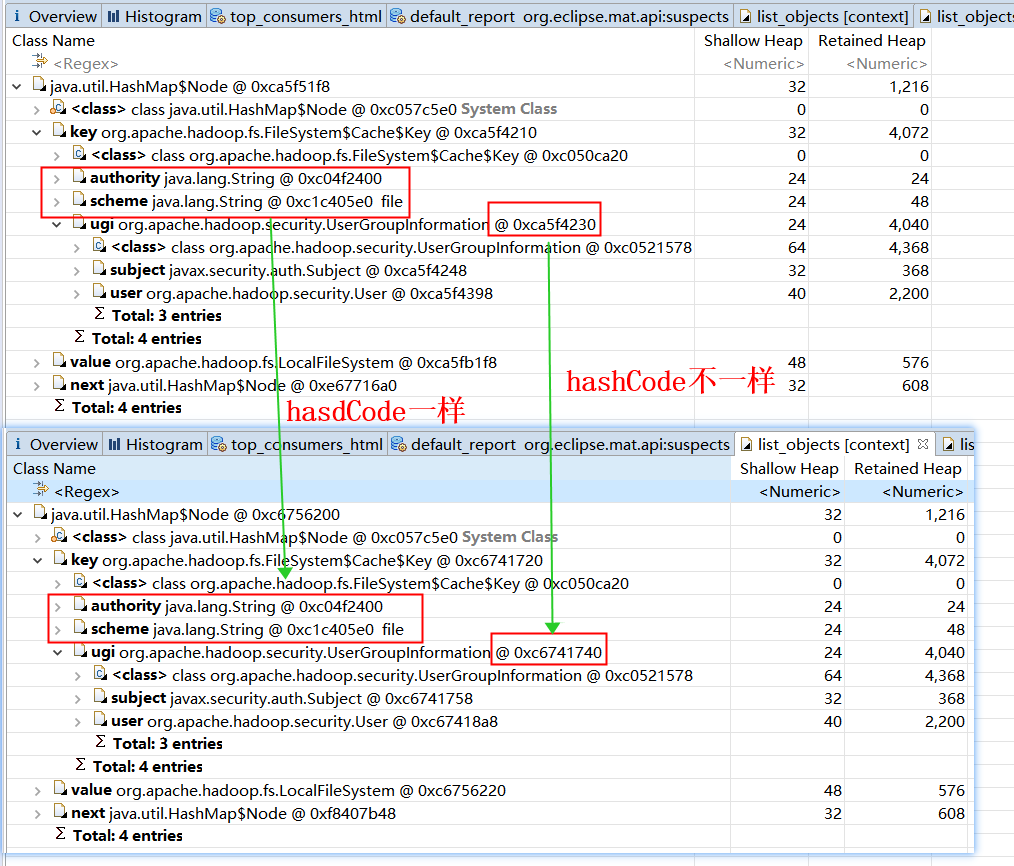

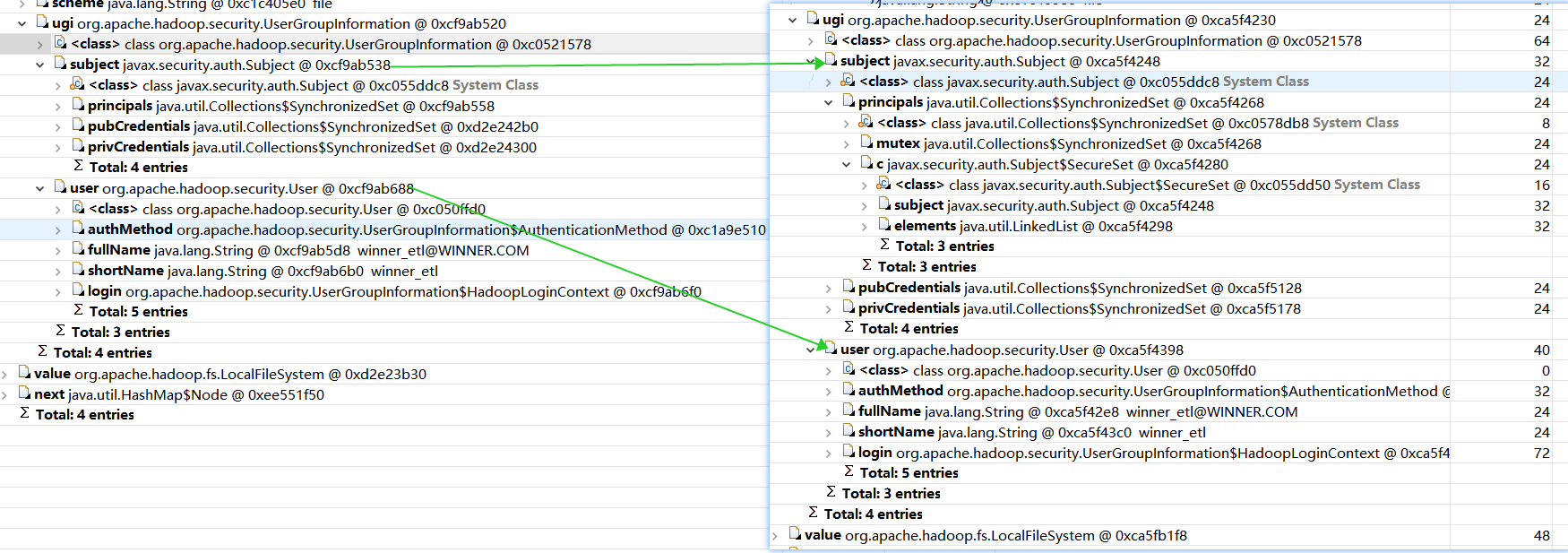

    /** FileSystem.Cache.Key */
    static class Key {
      final String scheme;
      final String authority;
      final UserGroupInformation ugi;
      final long unique;   // an artificial way to make a key unique

      Key(URI uri, Configuration conf) throws IOException {
        this(uri, conf, 0);
      }

      Key(URI uri, Configuration conf, long unique) throws IOException {
        scheme = uri.getScheme()==null ?
            "" : StringUtils.toLowerCase(uri.getScheme());
        authority = uri.getAuthority()==null ?
            "" : StringUtils.toLowerCase(uri.getAuthority());
        this.unique = unique;
        this.ugi = UserGroupInformation.getCurrentUser();
      }

      @Override
      public int hashCode() {
        return (scheme + authority).hashCode() + ugi.hashCode() + (int)unique;
      }

      static boolean isEqual(Object a, Object b) {
        return a == b || (a != null && a.equals(b));
      }

      @Override
      public boolean equals(Object obj) {
        if (obj == this) {
          return true;
        }
        if (obj instanceof Key) {
          Key that = (Key)obj;
          return isEqual(this.scheme, that.scheme)
              && isEqual(this.authority, that.authority)
              && isEqual(this.ugi, that.ugi)
              && (this.unique == that.unique);
        }
        return false;
      }

      @Override
      public String toString() {
        return "("+ugi.toString() + ")@" + scheme + "://" + authority;
      }
    }

Using MAT, we compared the scheme, authority, and ugi hash values of the cached Key objects.

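Listing the objects with outgoing references:

Our cluster has Kerberos enabled, and we can see that the hashCode of the UGI objects differs from key to key.

Let's look at how the UGI is obtained: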

    public static FileSystem get(final URI uri, final Configuration conf,
        final String user) throws IOException, InterruptedException {
      String ticketCachePath =
          conf.get(CommonConfigurationKeys.KERBEROS_TICKET_CACHE_PATH);
      // The ugi obtained here has a different hashCode on every call
      UserGroupInformation ugi =
          UserGroupInformation.getBestUGI(ticketCachePath, user);
      return ugi.doAs(new PrivilegedExceptionAction<FileSystem>() {
        @Override
        public FileSystem run() throws IOException {
          return get(uri, conf);
        }
      });
    }

    // UserGroupInformation.getBestUGI
    public static UserGroupInformation getBestUGI(
        String ticketCachePath, String user) throws IOException {
      if (ticketCachePath != null) {
        return getUGIFromTicketCache(ticketCachePath, user);
      } else if (user == null) {
        return getCurrentUser();
      } else {
        // We end up here
        return createRemoteUser(user);
      }
    }

    // UserGroupInformation.createRemoteUser
    public static UserGroupInformation createRemoteUser(String user, AuthMethod authMethod) {
      if (user == null || user.isEmpty()) {
        throw new IllegalArgumentException("Null user");
      }
      Subject subject = new Subject();
      subject.getPrincipals().add(new User(user));
      UserGroupInformation result = new UserGroupInformation(subject);
      result.setAuthenticationMethod(authMethod);
      return result;
    }

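So although the cache is in use, the Key comparison fails on almost every request. Each request then creates a new FileSystem instance, and memory leaks.

The UGI objects differ because we specify a user when obtaining the FileSystem. With Kerberos enabled and a user specified, the UGI objects in the heap dump show that a new one is created for every request.

Finally, let's look at the program's DEBUG log: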

2022-12-22 09:49:35 INFO [main] (HDFS.java:384) - FileSystem is

2022-12-22 09:49:35 DEBUG [IPC Parameter Sending Thread #0] (Client.java:1122) - IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM sending #9 org.apache.hadoop.hdfs.protocol.ClientProtocol.getFileInfo

2022-12-22 09:49:35 DEBUG [IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM] (Client.java:1176) - IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM got value #9

2022-12-22 09:49:35 DEBUG [main] (ProtobufRpcEngine.java:249) - Call: getFileInfo took 1ms

2022-12-22 09:49:35 DEBUG [main] (UserGroupInformation.java:254) - hadoop login

2022-12-22 09:49:35 DEBUG [main] (UserGroupInformation.java:187) - hadoop login commit

2022-12-22 09:49:35 DEBUG [main] (UserGroupInformation.java:199) - using kerberos user:winner_spark@WINNER.COM

2022-12-22 09:49:35 DEBUG [main] (UserGroupInformation.java:221) - Using user: "winner_spark@WINNER.COM" with name winner_spark@WINNER.COM

2022-12-22 09:49:35 DEBUG [main] (UserGroupInformation.java:235) - User entry: "winner_spark@WINNER.COM"

2022-12-22 09:49:35 INFO [main] (UserGroupInformation.java:1009) - Login successful for user winner_spark@WINNER.COM using keytab file /etc/security/keytabs/winner_spark.keytab

2022-12-22 09:49:35 DEBUG [IPC Parameter Sending Thread #0] (Client.java:1122) - IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM sending #10 org.apache.hadoop.hdfs.protocol.ClientProtocol.getListing

2022-12-22 09:49:35 DEBUG [IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM] (Client.java:1176) - IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM got value #10

2022-12-22 09:49:35 DEBUG [main] (ProtobufRpcEngine.java:249) - Call: getListing took 15ms

2022-12-22 09:49:35 DEBUG [IPC Parameter Sending Thread #0] (Client.java:1122) - IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM sending #11 org.apache.hadoop.hdfs.protocol.ClientProtocol.getListing

2022-12-22 09:49:35 DEBUG [IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM] (Client.java:1176) - IPC Client (1861781750) connection to hdp103/192.168.2.152:8020 from winner_spark@WINNER.COM got value #11

2022-12-22 09:49:35 DEBUG [main] (ProtobufRpcEngine.java:249) - Call: getListing took 10ms

2022-12-22 09:49:35 INFO [main] (HDFS.java:279) - Get a list of files and sort them by access time!

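2022-12-22 09:49:35 INFO [main] (FtpUtilDS.java:192) - ----------- not exist rename failure file ---------------

The DEBUG log also shows that Kerberos performs another login each time the program walks the HDFS files over IPC. This matches the analysis above: the cache is in use, but because of the Key comparison nearly every request still ends up with a new FileSystem instance.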

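The conclusion is that when a user is specified, a new Subject is constructed on every call, so the hashCode computed for each UserGroupInformation is different. This is what ultimately makes the FileSystem Cache ineffective.

Based on this analysis, I made a small change to the old code: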
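The effect can be reproduced without Hadoop. The sketch below models a cache key whose user component compares by the identity of its Subject, which is the behavior the analysis above attributes to UserGroupInformation. `FakeUgi` and `Key` are hypothetical stand-ins rather than the Hadoop classes; the user name and authority are taken from the log above.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Objects;
import javax.security.auth.Subject;

class UgiCacheKeyDemo {

    /** Hypothetical stand-in for UserGroupInformation: equality is Subject identity. */
    static final class FakeUgi {
        private final Subject subject = new Subject(); // a new Subject per instance
        private final String user;

        FakeUgi(String user) {
            this.user = user;
        }

        @Override
        public int hashCode() {
            return System.identityHashCode(subject);
        }

        @Override
        public boolean equals(Object o) {
            return o instanceof FakeUgi && ((FakeUgi) o).subject == subject;
        }

        @Override
        public String toString() {
            return user;
        }
    }

    /** Stand-in for FileSystem.Cache.Key: scheme, authority and user. */
    static final class Key {
        final String scheme;
        final String authority;
        final FakeUgi ugi;

        Key(String scheme, String authority, FakeUgi ugi) {
            this.scheme = scheme;
            this.authority = authority;
            this.ugi = ugi;
        }

        @Override
        public int hashCode() {
            return (scheme + authority).hashCode() + ugi.hashCode();
        }

        @Override
        public boolean equals(Object o) {
            if (!(o instanceof Key)) {
                return false;
            }
            Key that = (Key) o;
            return Objects.equals(scheme, that.scheme)
                    && Objects.equals(authority, that.authority)
                    && Objects.equals(ugi, that.ugi);
        }
    }

    /** Simulates `requests` lookups and returns how many entries the cache ends up with. */
    static int cacheSizeAfter(int requests, boolean reuseUgi) {
        Map<Key, Object> cache = new HashMap<>();
        FakeUgi shared = new FakeUgi("winner_spark");
        for (int i = 0; i < requests; i++) {
            FakeUgi ugi = reuseUgi ? shared : new FakeUgi("winner_spark");
            cache.computeIfAbsent(new Key("hdfs", "hdp103:8020", ugi), k -> new Object());
        }
        return cache.size();
    }

    public static void main(String[] args) {
        System.out.println(cacheSizeAfter(1000, false)); // prints 1000: every lookup misses
        System.out.println(cacheSizeAfter(1000, true));  // prints 1: lookups hit the cache
    }
}
```

The user name, scheme and authority are identical in both runs; only the identity of the user object changes, and that alone decides whether the cache holds one entry or one entry per request.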

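    Configuration conf = null;
    FileSystem fileSystem = null;
    ....
    finally {
      if (fileSystem != null) {
        fileSystem.close();
        conf.clear();
      }
    }

After this change the program ran for more than two months without a restart. Inspecting the heap showed that most objects are reclaimed in the young generation and that old-generation occupancy stays below 10%. With that, the problem is solved.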
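Why closing helps can be shown with a small model. The sketch assumes that closing a cached FileSystem also removes it from the cache, which is what lets the garbage collector reclaim the instance and its Configuration. `FakeFs` is a hypothetical stand-in, and try-with-resources is used here as an equivalent of the try/finally above, since FileSystem implements Closeable.

```java
import java.io.Closeable;
import java.util.HashMap;
import java.util.Map;

class CloseEvictsDemo {
    static final Map<Object, FakeFs> CACHE = new HashMap<>();

    /** Hypothetical stand-in for a cached FileSystem: close() evicts it from the cache. */
    static final class FakeFs implements Closeable {
        private final Object key;

        FakeFs(Object key) {
            this.key = key;
        }

        @Override
        public void close() {
            CACHE.remove(key, this); // remove only if this instance is still the cached one
        }
    }

    /** Creates and caches an instance under a fresh key, like a fresh UGI per request. */
    static FakeFs open() {
        Object key = new Object();
        FakeFs fs = new FakeFs(key);
        CACHE.put(key, fs);
        return fs;
    }

    /** Simulates `requests` uses and returns the number of entries left in the cache. */
    static int cacheSizeAfter(int requests, boolean closeAfterUse) {
        CACHE.clear();
        for (int i = 0; i < requests; i++) {
            if (closeAfterUse) {
                try (FakeFs fs = open()) {
                    // use fs here; it is closed automatically when the block exits
                }
            } else {
                open(); // never closed: the entry stays in the cache
            }
        }
        return CACHE.size();
    }

    public static void main(String[] args) {
        System.out.println(cacheSizeAfter(1000, false)); // prints 1000
        System.out.println(cacheSizeAfter(1000, true));  // prints 0
    }
}
```

Closing after each use trades cache reuse for bounded memory: every request still pays for creating a new instance, but nothing accumulates.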