手撕/手写/自己实现 BN层/batch norm/BatchNormalization python torch pytorch

计算过程

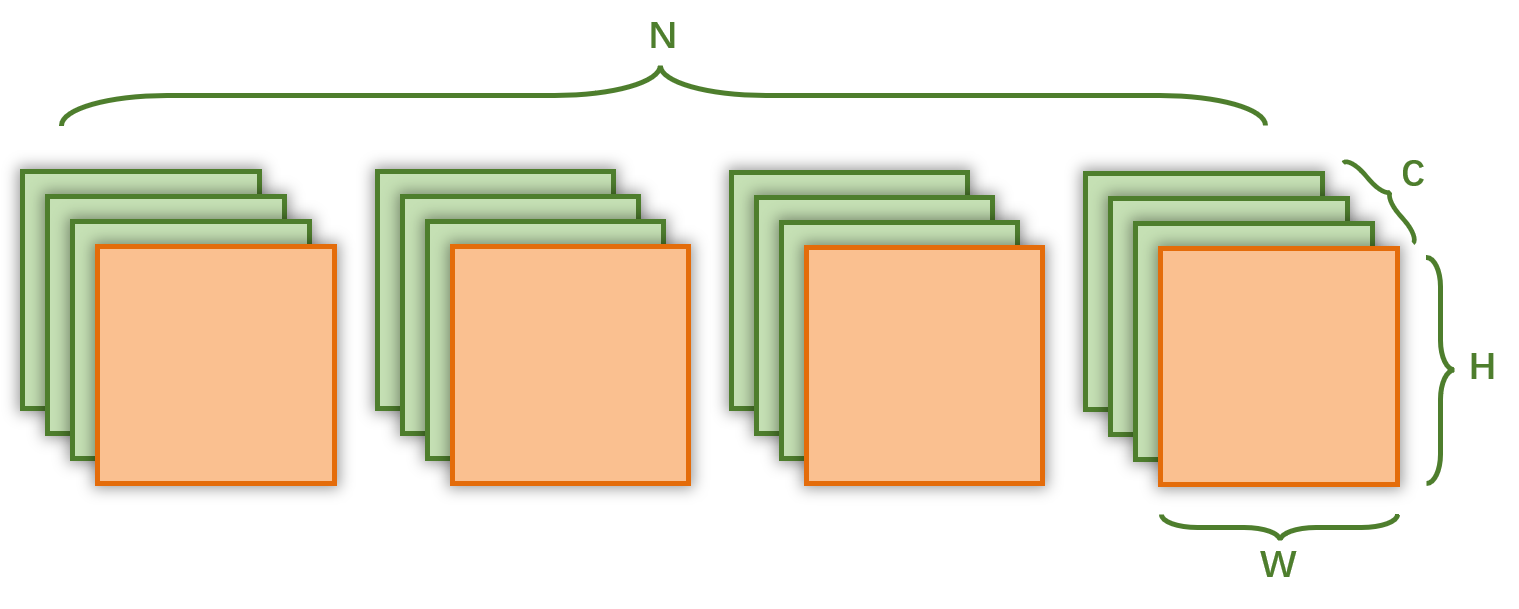

在卷积神经网络中,BN 层输入的特征图维度是 (N,C,H,W), 输出的特征图维度也是 (N,C,H,W)

N 代表 batch size

C 代表 通道数

H 代表 特征图的高

W 代表 特征图的宽

我们需要在通道维度上做 batch normalization,

在一个 batch 中,

使用 所有特征图 相同位置上的 channel 的 所有元素,计算 均值和方差,

然后用计算出来的 均值和 方差,更新对应特征图上的 channel , 生成新的特征图

如下图所示:

对于4个橘色的特征图,计算所有元素的均值和方差,然后在用于更新4个特征图中的元素(原来元素减去均值,除以方差)

代码

def my_batch_norm_2d_detail(features, eps=1e-5):'''这个函数的写法是为了帮助理解 BatchNormalization 具体运算过程实际使用时这样写会比较慢'''n,c,h,w = features.shapefeatures_copy = features.clone()running_var = torch.randn(c)running_mean = torch.randn(c)for ci in range(c):# 分别 处理每一个通道mean = 0 # 均值var = 0 # 方差_sum = 0 # 对一个 batch 中,特征图相同位置 channel 的每一个元素求和for ni in range(n): for hi in range(h):for wi in range(w):_sum += features[ni,ci, hi, wi]mean = _sum / (n * h * w) running_mean[ci] = mean_sum = 0# 对一个 batch 中,特征图相同位置 channel 的每一个元素求平方和,用于计算方差 for ni in range(n): for hi in range(h):for wi in range(w):_sum += (features[ni,ci, hi, wi] - mean) 2var = _sum / (n * h * w )running_var[ci] = _sum / (n * h * w - 1)# 更新元素for ni in range(n): for hi in range(h):for wi in range(w):features_copy[ni,ci, hi, wi] = (features_copy[ni,ci, hi, wi] - mean) / torch.sqrt(var + eps) return features_copy, running_mean, running_varif __name__ == "__main__":torch.set_printoptions(precision=7)torch_bn = nn.BatchNorm2d(4) # 设置 channel 数torch_bn.momentum = Nonefeatures = torch.randn(4, 4, 2, 2) # (N,C,H,W)torch_bn_output = torch_bn(features) my_bn_output, running_mean, running_var = my_batch_norm_2d_detail(features) print(torch.allclose(torch_bn_output, my_bn_output))print(torch.allclose(torch_bn.running_mean, running_mean))print(torch.allclose(torch_bn.running_var, running_var))注意事项

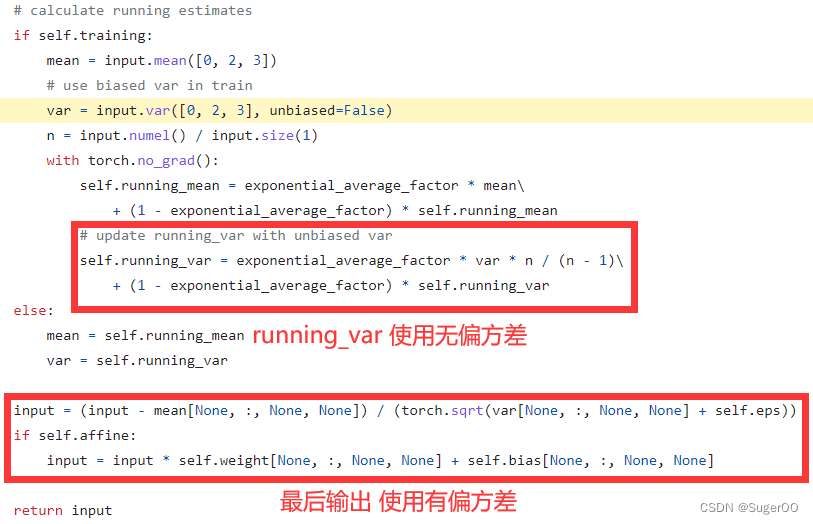

方差计算

需要注意的是,在训练的过程中,方差有两种不同的计算方式,

在训练时,用于更新特征图的是 有偏方差

而 running_var 的计算,使用的是 无偏方差

相关链接

官方人员手写BN

"""

Comparison of manual BatchNorm2d layer implementation in Python and

nn.BatchNorm2d@author: ptrblck

"""import torch

import torch.nn as nndef compare_bn(bn1, bn2):err = Falseif not torch.allclose(bn1.running_mean, bn2.running_mean):print('Diff in running_mean: {} vs {}'.format(bn1.running_mean, bn2.running_mean))err = Trueif not torch.allclose(bn1.running_var, bn2.running_var):print('Diff in running_var: {} vs {}'.format(bn1.running_var, bn2.running_var))err = Trueif bn1.affine and bn2.affine:if not torch.allclose(bn1.weight, bn2.weight):print('Diff in weight: {} vs {}'.format(bn1.weight, bn2.weight))err = Trueif not torch.allclose(bn1.bias, bn2.bias):print('Diff in bias: {} vs {}'.format(bn1.bias, bn2.bias))err = Trueif not err:print('All parameters are equal!')class MyBatchNorm2d(nn.BatchNorm2d):def __init__(self, num_features, eps=1e-5, momentum=0.1,affine=True, track_running_stats=True):super(MyBatchNorm2d, self).__init__(num_features, eps, momentum, affine, track_running_stats)def forward(self, input):self._check_input_dim(input)exponential_average_factor = 0.0if self.training and self.track_running_stats:if self.num_batches_tracked is not None:self.num_batches_tracked += 1if self.momentum is None: # use cumulative moving averageexponential_average_factor = 1.0 / float(self.num_batches_tracked)else: # use exponential moving averageexponential_average_factor = self.momentum# calculate running estimatesif self.training:mean = input.mean([0, 2, 3])# use biased var in trainvar = input.var([0, 2, 3], unbiased=False)n = input.numel() / input.size(1)with torch.no_grad():self.running_mean = exponential_average_factor * mean\\+ (1 - exponential_average_factor) * self.running_mean# update running_var with unbiased varself.running_var = exponential_average_factor * var * n / (n - 1)\\+ (1 - exponential_average_factor) * self.running_varelse:mean = self.running_meanvar = self.running_varinput = (input - mean[None, :, None, None]) / (torch.sqrt(var[None, :, None, None] + self.eps))if self.affine:input = input * self.weight[None, :, None, None] + self.bias[None, :, None, None]return input# Init BatchNorm layers

my_bn = MyBatchNorm2d(3, affine=True)

bn = nn.BatchNorm2d(3, affine=True)compare_bn(my_bn, bn) # weight and bias should be different

# Load weight and bias

my_bn.load_state_dict(bn.state_dict())

compare_bn(my_bn, bn)# Run train

for _ in range(10):scale = torch.randint(1, 10, (1,)).float()bias = torch.randint(-10, 10, (1,)).float()x = torch.randn(10, 3, 100, 100) * scale + biasout1 = my_bn(x)out2 = bn(x)compare_bn(my_bn, bn)torch.allclose(out1, out2)print('Max diff: ', (out1 - out2).abs().max())# Run eval

my_bn.eval()

bn.eval()

for _ in range(10):scale = torch.randint(1, 10, (1,)).float()bias = torch.randint(-10, 10, (1,)).float()x = torch.randn(10, 3, 100, 100) * scale + biasout1 = my_bn(x)out2 = bn(x)compare_bn(my_bn, bn)torch.allclose(out1, out2)print('Max diff: ', (out1 - out2).abs().max())